How to build post-training AI Interfaces at the speed of R&D with Express Interface

Key takeaways

- Express Interface's drag-and-drop UI builder and Python engine let project managers and engineers deploy or update complex annotation interfaces in hours, unlike the days or weeks traditional frontend coordination required.

- The platform enables real-time interactivity via an in-browser Python runtime, allowing the interface to instantly respond to annotator inputs. This dynamic environment can hide or show fields, manage component state and enforce custom validation, ensuring it adapts to the specific research task's nuance and complexity.

- The tool uses an LLM-as-a-judge QC Agent for instant, structured feedback on submissions, integrating continuous quality assurance into the workflow. This eliminates batch review delays and scales without extensive human review.

- Express Interface is built to handle highly sophisticated AI training scenarios that require multi-modal interaction, including video annotation, agent evaluation, text-to-SQL and visual question answering (VQA).

Express Interface unlocks dynamic, interactive workflows that adapt instantly as your training methodologies evolve

In the hyper-competitive artificial intelligence (AI) landscape, model builders are intensely focused on accelerating development cycles and moving projects from concept to production as quickly as possible. As access to powerful open-source and commercial large language models (LLMs) becomes increasingly commoditized, the data used for fine-tuning is the primary source of competitive differentiation. When competing firms can access the same base model, the company that can best tailor that model to its unique business context using proprietary or highly curated data will create a superior and defensible AI product. Without high-quality data, even the most sophisticated research methodologies fall flat.

At TELUS Digital, quality is embedded as a design principle across every phase of the program lifecycle. This commitment begins with our execution platform, Fine-Tune Studio (FTS), where domain experts curate training data with purpose-built interfaces. FTS provides a comprehensive suite of native editors tailored to diverse machine learning research scenarios, from aligning models with human preferences to agent evaluation tasks that validate tool-calling trajectories and planning.

However, as training and evaluation methodologies grow more sophisticated and continue to evolve, we recognized that our customers needed the ability to build custom, dynamic interfaces that adapt to their specific research context. To solve the interface velocity problem, we developed Express Interface, a new capability within Fine-Tune Studio that empowers project managers (PMs) to design and deploy custom task interfaces on demand. Built on a composable, centralized UI with an intuitive visual block builder, Express Interface allows our PMs to define UI layouts and craft project-specific labeling experiences that capture the full context of your task requirements. PMs can set up custom user experiences that react to user interactions in real time, control block behavior dynamically, manage state across components and add validation rules that enforce quality at submission time. With access to environment context and full control over task execution, Express Interface ensures that annotators work within an environment precisely engineered for your research needs, resulting in higher-quality training data that reflects the exact nuance and complexity your models require.

Building flexible, event-driven workflows that adapt to any training or evaluation methodology

Research hypotheses evolve rapidly. Consider a reasoning evaluation project where the team pivoted multiple times as the project progressed, requiring constant changes to the annotation pipeline. Traditionally, each iteration took days of coordination with frontend engineers, testing and redeployment. With Express Interface, the team does not need to rebuild the interface: they can update validation rules and display fields through Python code and deploy the changes within hours.

Express Interface enables forward-deployed engineers to configure interfaces that bind directly to complex, nested JSON structures, define event-driven logic that responds to annotator input in real time and enforce task-specific validation rules. As experiment parameters change, the interface configuration can be updated and redeployed immediately, since every interface is versioned, diffable and shareable across projects.
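To make the idea of binding to nested JSON concrete, here is a minimal Python sketch of resolving a path expression against a task payload. It handles only a small dotted and indexed subset in the style of JSONPath. The `resolve_path` helper and the sample `TASK` payload are hypothetical illustrations, not Express Interface's actual API.

```python
import re
from typing import Any

# Hypothetical task payload; the field names are illustrative only.
TASK = {
    "prompt": "Explain recursion.",
    "responses": [
        {"model": "A", "text": "Recursion is...", "meta": {"tokens": 42}},
        {"model": "B", "text": "A function that...", "meta": {"tokens": 57}},
    ],
}

# Matches either ".key" or "[index]".
_TOKEN = re.compile(r"\.([A-Za-z_][A-Za-z0-9_]*)|\[(\d+)\]")


def resolve_path(data: Any, path: str) -> Any:
    """Resolve a simple path such as '$.responses[1].meta.tokens'.

    Supports only child keys and integer indices, a small subset of JSONPath.
    """
    if not path.startswith("$"):
        raise ValueError("path must start with '$'")
    current = data
    pos = 1
    while pos < len(path):
        match = _TOKEN.match(path, pos)
        if match is None:
            raise ValueError(f"unsupported path syntax at position {pos}: {path!r}")
        key, index = match.group(1), match.group(2)
        current = current[key] if key is not None else current[int(index)]
        pos = match.end()
    return current
```

A display block bound to `$.responses[0].text` would, under these assumptions, show the first model's output for whichever task is loaded.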

What’s inside Express Interface

- Drag-and-drop UI builder:

  - Component library: A wide variety of pre-built components spanning layout, input, display and action blocks enable any annotation use case. Each component is fully configurable through a properties panel, allowing teams to control labels, options, data bindings and visibility conditions directly from the interface.

  - Layout editor: The drag-and-drop canvas enables forward-deployed engineers and project teams to visually arrange components and build task interfaces without writing frontend code. This eliminates the need for frontend engineering involvement in interface design.

  - Dynamic data binding: Layouts support data binding to task input fields using JSONPath expressions, so annotators see content dynamically populated from the underlying job data. Whether displaying model outputs, nested metadata or array elements, the interface automatically pulls the right data for each task.

- Python-based Code Engine:

  - An in-browser Python runtime sits alongside the UI builder, giving our forward-deployed engineers direct control over interface behavior. They can write event handlers that respond to annotator input in real time, define validation rules that block submission until required fields are completed and shape the final JSON output structure.

  - Interfaces support conditional logic and dynamic behavior, allowing elements to show, hide or update based on annotator actions. This enables complex, multistep workflows within a single cohesive task.
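The event handling, validation and output shaping described above can be sketched in plain Python. This is an illustration under stated assumptions: the `state` dictionary, the block ids (`has_error`, `error_details`) and the function names `on_change`, `validate` and `build_output` are all hypothetical, and the real Code Engine's hooks may look different.

```python
from typing import Any

# Hypothetical form state: block id -> current value and visibility.
state: dict[str, dict[str, Any]] = {
    "has_error": {"value": None, "visible": True},
    "error_details": {"value": "", "visible": False},
}


def on_change(block_id: str, value: Any) -> None:
    """Event handler: record the new value and toggle dependent blocks."""
    state[block_id]["value"] = value
    if block_id == "has_error":
        # Show the free-text field only when the annotator reports an error.
        state["error_details"]["visible"] = value == "yes"


def validate() -> list[str]:
    """Return a list of problems; an empty list means submission may proceed."""
    problems = []
    if state["has_error"]["value"] not in ("yes", "no"):
        problems.append("Select whether the response contains an error.")
    details = state["error_details"]
    if details["visible"] and not details["value"].strip():
        problems.append("Describe the error before submitting.")
    return problems


def build_output() -> dict[str, Any]:
    """Shape the final JSON payload, omitting hidden fields."""
    return {block_id: block["value"] for block_id, block in state.items() if block["visible"]}
```

Keeping visibility in the same state as the values lets the validation rule and the output shaper agree on which fields count, so a hidden field can neither block submission nor leak into the result.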

Embedding automated QC into your annotation workflow

Express Interface is designed to get projects live quickly. Paired with our LLM-as-a-judge quality control (QC) agent, teams can bring the same speed to quality assurance as well.

Once a custom interface is configured, teams can integrate the QC Agent to provide annotators with real-time, structured feedback on their submissions. Rather than surfacing quality issues at the end of a batch review cycle and extending the feedback loop, the QC Agent evaluates submissions as they happen, guiding annotators toward improvements in the moment. This makes quality assurance a continuous, embedded part of the workflow rather than a separate step that follows it, covering both speed and quality in a single motion. A new workflow with a configured interface, defined QC rubrics and real-time feedback can be stood up within hours. As the project scales, quality standards remain consistent across the entire dataset, with the QC Agent handling the continuous validation layer that would otherwise require significant reviewer bandwidth.
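One way to picture rubric-driven, structured feedback is the sketch below. It is not the QC Agent's implementation: the `Criterion` and `Feedback` types, the `review` function and the `stub_judge` stand-in are hypothetical, and the stub replaces the LLM call with a trivial word-count check so the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Criterion:
    name: str
    description: str


@dataclass
class Feedback:
    criterion: str
    passed: bool
    comment: str


# A judge takes (criterion, submission text) and returns (passed, comment).
# In practice this would wrap an LLM call, which is out of scope here.
Judge = Callable[[Criterion, str], tuple[bool, str]]


def review(submission: str, rubric: list[Criterion], judge: Judge) -> list[Feedback]:
    """Evaluate one submission against every rubric criterion."""
    results = []
    for criterion in rubric:
        passed, comment = judge(criterion, submission)
        results.append(Feedback(criterion.name, passed, comment))
    return results


def stub_judge(criterion: Criterion, submission: str) -> tuple[bool, str]:
    """Stand-in for the LLM judge: checks only a minimum word count."""
    ok = len(submission.split()) >= 5
    comment = "Looks sufficiently detailed." if ok else "Add more detail."
    return ok, comment
```

Because the judge is passed in as a callable, the same `review` loop could be run per submission as it arrives, with one `Feedback` entry per rubric criterion shown to the annotator.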

Examples of Express Interface at work

Modern training workflows require tight integration between the model being trained, the content being evaluated, human expertise and quality assurance mechanisms. Consider several real-world examples:

Video annotation tasks

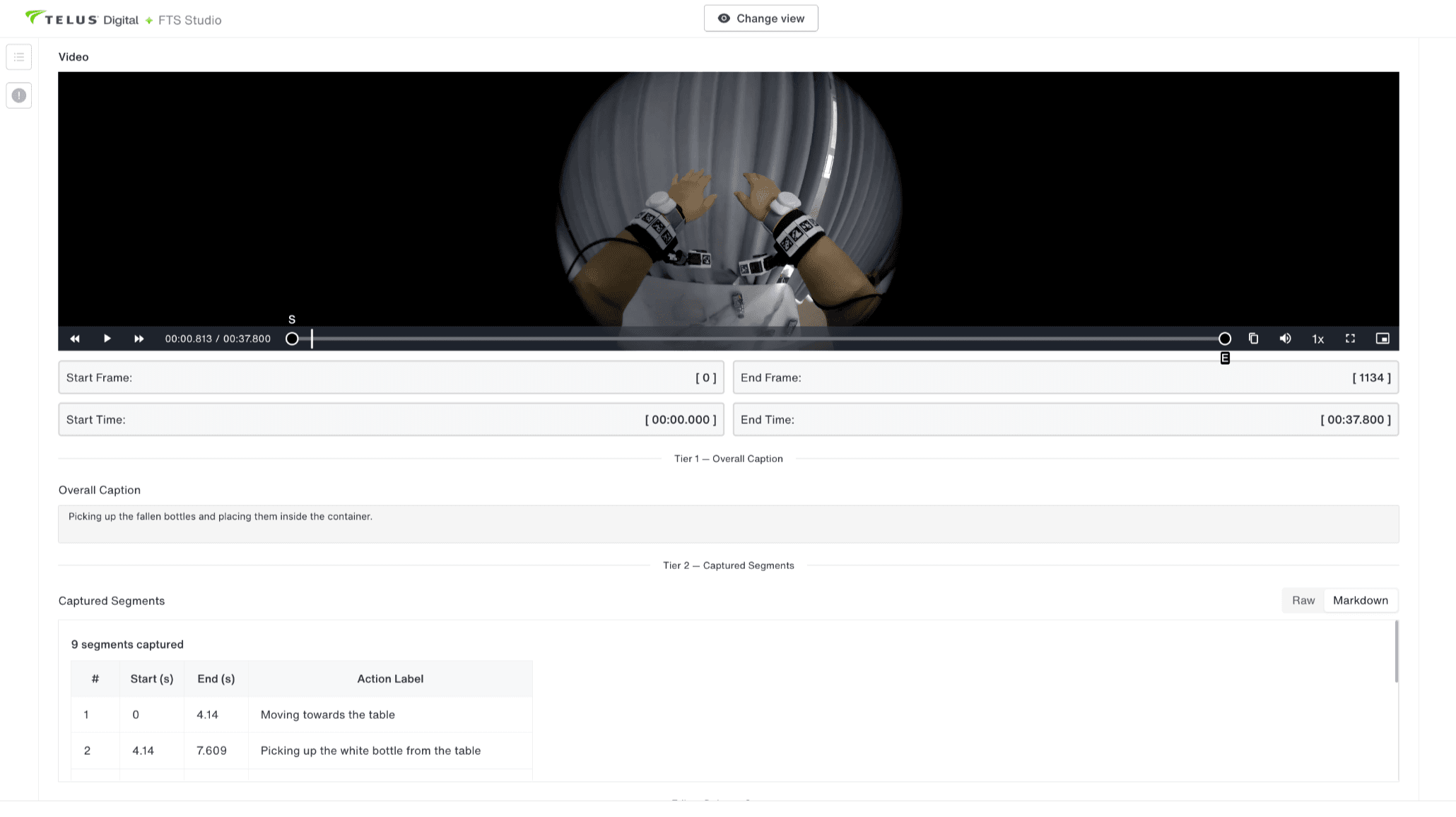

A semantic video labeling interface with key events and timestamps.

Express Interface enables annotators to view segmented video content alongside structured annotation fields for detailed visual analysis. Whether you're labeling video segments, annotating temporal events or capturing frame-level feedback, Express Interface allows you to build interfaces that seamlessly integrate video playback with custom annotation logic. The unified context display prevents fatigue-induced errors by showing the full temporal sequence with real-time validation rules that ensure consistent frame boundaries and temporal event labeling.
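As an illustration of the kind of temporal validation rule mentioned above, the following sketch checks that labeled segments have valid bounds and do not overlap. The `Segment` type and `validate_segments` function are hypothetical, and the specific checks are assumptions about what "consistent frame boundaries" might mean for a given project.

```python
from dataclasses import dataclass


@dataclass
class Segment:
    label: str
    start: float  # seconds from the beginning of the video
    end: float    # seconds from the beginning of the video


def validate_segments(segments: list[Segment], duration: float) -> list[str]:
    """Return a list of problems; an empty list means the segments are consistent."""
    problems = []
    ordered = sorted(segments, key=lambda seg: seg.start)
    for seg in ordered:
        if not seg.label.strip():
            problems.append(f"Segment at {seg.start}s has no label.")
        # Each segment must lie inside the video and have positive length.
        if not (0 <= seg.start < seg.end <= duration):
            problems.append(f"Segment '{seg.label}' has invalid bounds.")
    # Compare each segment with its successor to detect overlaps.
    for prev, nxt in zip(ordered, ordered[1:]):
        if nxt.start < prev.end:
            problems.append(f"Segments '{prev.label}' and '{nxt.label}' overlap.")
    return problems
```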

Agent and model evaluation

Model comparison interface with two integrated model responses.

Comparative evaluation data is often the most subjective and error-prone. Evaluators must mentally juggle the prompt, multiple model outputs and rating criteria, leading to biased or shallow assessments. Without seeing all relevant information simultaneously, evaluators make uninformed judgments. Express Interface enables side-by-side evaluation workflows where annotators can assess different model outputs, compare reasoning approaches and provide detailed feedback in one interface. This unified view reduces cognitive load and context-switching errors. Structured rating fields with validation enforce consistent evaluation criteria across all annotators.

Text-to-SQL

An interface to map natural language prompts to SQL queries.

Text-to-SQL is a critical capability for enterprise AI systems, enabling natural language interfaces to databases and data warehouses. Training high-quality models requires diverse, validated examples of natural language questions paired with syntactically correct SQL queries and their execution results. By enabling annotators to write, execute and validate queries in real time against live databases, we can create ground-truth training data that reflects actual database schemas and query complexity.

The interface displays the database schema, accepts SQL query input from annotators and executes queries in real time to show results. Whether you're validating query correctness, comparing different approaches to the same question or collecting diverse query patterns for model training, the interface presents the schema context, query input field, execution button and formatted results in a unified view. The Python code engine handles database connectivity, query execution and result formatting, which eliminates weeks of backend integration work and enables teams to focus entirely on data quality and model training.
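The execute-and-format step can be sketched with Python's standard `sqlite3` module. This is a simplified stand-in: it uses a throwaway in-memory database rather than a live one, and the `SCHEMA` contents, the `run_query` function and the SELECT-only guard are assumptions made for the example, not a description of how Express Interface connects to databases.

```python
import sqlite3

# Hypothetical schema and seed data shown to the annotator.
SCHEMA = """
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, total REAL);
INSERT INTO orders (customer, total) VALUES ('Ada', 120.0), ('Lin', 80.5), ('Ada', 30.0);
"""


def run_query(sql: str) -> dict:
    """Execute an annotator's query against a throwaway in-memory database."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(SCHEMA)
        # Simple guard for this sketch: accept read-only SELECT statements only.
        if not sql.lstrip().lower().startswith("select"):
            return {"ok": False, "error": "Only SELECT statements are allowed."}
        cursor = conn.execute(sql)
        columns = [col[0] for col in cursor.description]
        rows = [list(row) for row in cursor.fetchall()]
        return {"ok": True, "columns": columns, "rows": rows}
    except sqlite3.Error as exc:
        # Surface syntax and schema errors to the annotator instead of raising.
        return {"ok": False, "error": str(exc)}
    finally:
        conn.close()
```

Returning errors as data rather than raising them lets the interface show a failed query next to the schema, so the annotator can correct it before the example is saved.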

The real competitive advantage isn't the model, it's how fast you can improve it

Across all these scenarios, the core requirement is the same: model interactivity is essential to training data generation. The interface must facilitate interaction between the AI system, the content being evaluated and human annotators, all within a single, cohesive workflow. By combining visual interface design with Python-driven logic, embedded quality assurance and real-time feedback mechanisms, Express Interface eliminates the traditional bottlenecks that slow projects from research to production. Contact our team of experts to explore how a customized solution can accelerate your model development while maintaining the data quality standards your frontier models demand.