What users actually want from AI interfaces: Five industry use cases

Katie Krol

Lead Product Researcher

Key takeaways

- Users envision AI moving beyond the chat window to a tool they don’t have to work hard to manage.

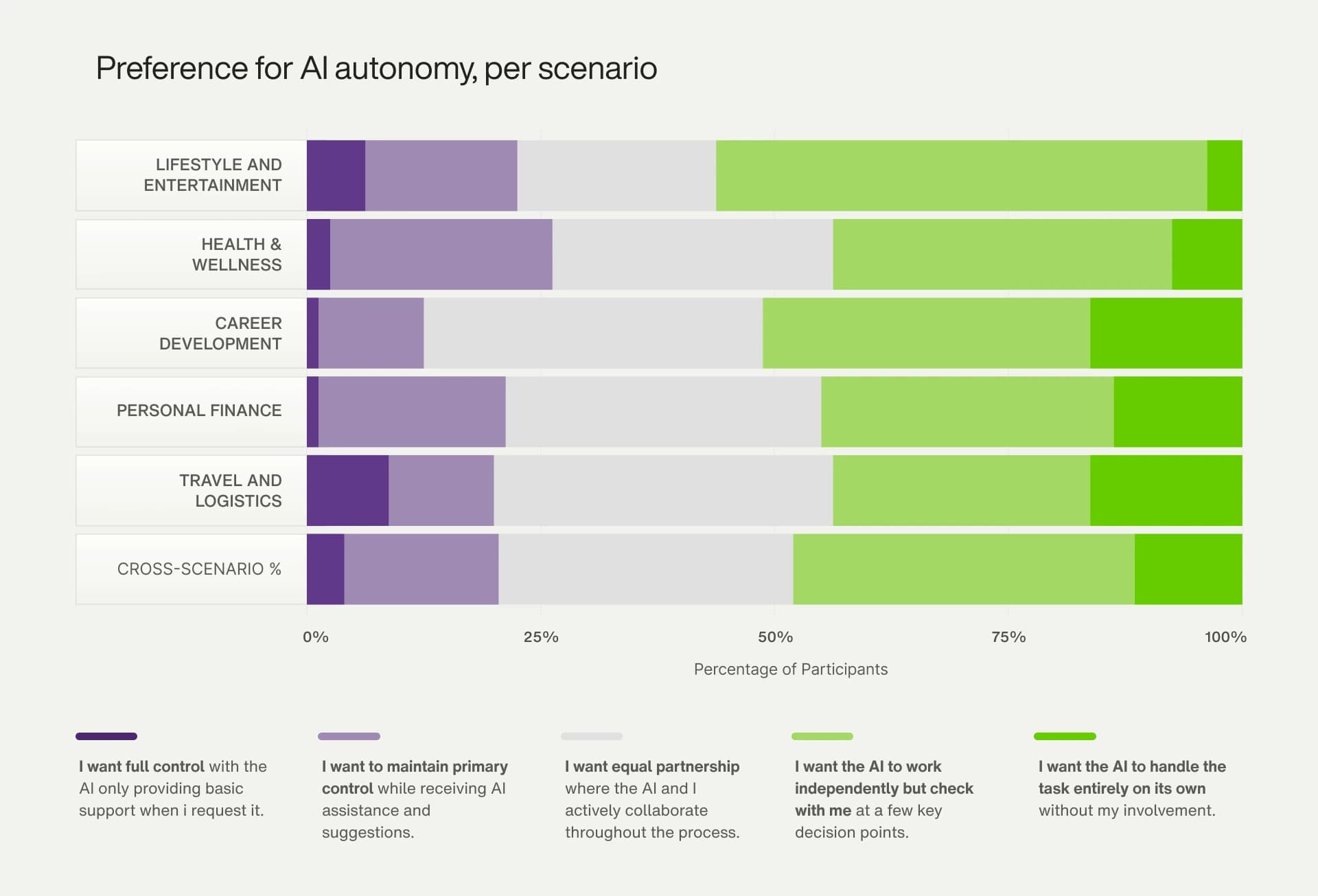

- User mindsets are not fixed personality types. The same person may behave as a delegator when booking travel and a deliberator when managing their health.

- High-stakes industries like healthcare and financial services require more oversight features and passive triggers like biometric data, while low-stakes contexts like entertainment favor simpler, more autonomous interactions.

- Across five scenarios, there is no single winning interface pattern — the top-ranked building blocks shift significantly depending on the stakes of the task and the sensitivity of the domain.

Users are ready to hand the wheel to AI … but only if they can take it back.

In our report, The future of AI interfaces, we surveyed everyday, tech-savvy consumers and found something that reframes the design conversation: Users envision a future where AI breaks free from the chat window. They can imagine how beneficial an ambient, multimodal partner that just gets them would be, handling cognitive tasks without being asked. However, that willingness to delegate rests entirely on one condition, and that’s control. The desire for a more agentic AI is directly tied to the ability to customize its behavior and intervene in its actions. While that may sound like a paradox, it’s actually the prerequisite for trust.

To understand how that tension plays out in practice, we applied our research to five distinct use cases that span industries and found that user behavior shifts based on what's at stake. Let’s take a closer look at the user mindsets involved and the core AI functionalities that will guide the future of AI-enabled UX/UI for industries, including:

- Lifestyle and entertainment

- Health and wellness

- Career development

- Personal finance

- Travel and logistics

What makes AI user experience different?

Unlike traditional user experience, where a system responds predictably to user input, AI user experience must account for systems that initiate, decide and execute autonomously. That makes the question of how much control to give users, and when, the central design challenge. Get that balance right and you build trust. Get it wrong and users disengage, regardless of how capable the underlying AI is.

What are the building blocks of AI interface design?

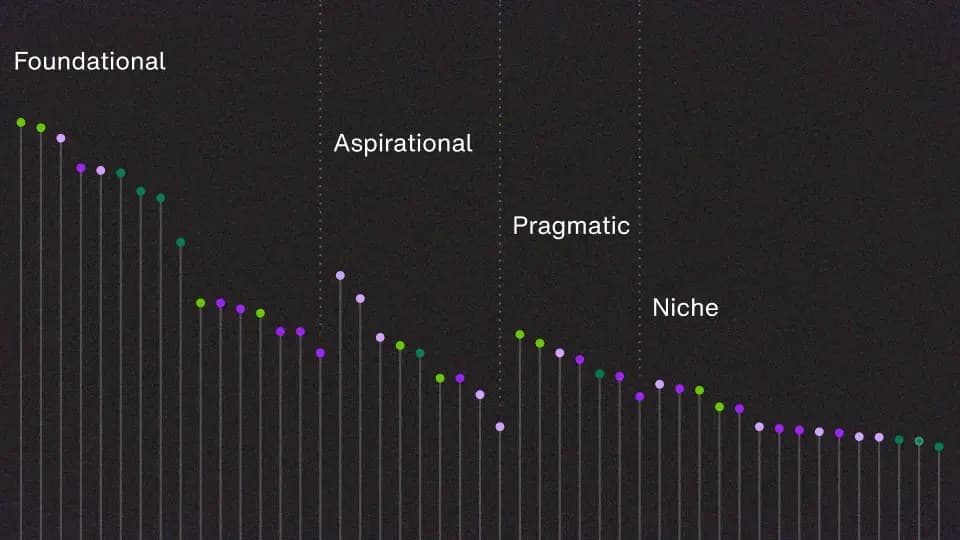

The 47 building blocks used in interface design scenarios are the foundational elements of this research. To develop this list, we conducted a deep-dive analysis of numerous AI interfaces, ranging from traditional LLM chat-based tools to more complex, agentic platforms like Cursor, Perplexity, Deep Research and Operator.

We mapped the specific attributes and functionalities of each interface, which led to a natural categorization of features into four key areas:

- Triggers: How a task is initiated

- Outputs: How the AI delivers results

- Customization: How the user can personalize the experience

- Task oversight: How the user can monitor or guide the AI’s process

Within triggers, we distinguished between active triggers, where the user explicitly initiates an interaction (e.g., typing a prompt), and passive triggers, where an event initiates an interaction without a direct user command (e.g., a biometric change or reaching a specific location).
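One way to picture this taxonomy is as a small data model. The following is a minimal sketch, not the schema used in the research: the class and function names (`Category`, `TriggerMode`, `BuildingBlock`, `names_in`, `passive_triggers`) are hypothetical, and the example list holds only a handful of blocks named in this article rather than all 47.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Category(Enum):
    TRIGGER = "trigger"                # how a task is initiated
    OUTPUT = "output"                  # how the AI delivers results
    CUSTOMIZATION = "customization"    # how the user personalizes the experience
    TASK_OVERSIGHT = "task oversight"  # how the user monitors or guides the AI


class TriggerMode(Enum):
    ACTIVE = "active"    # the user explicitly initiates the interaction
    PASSIVE = "passive"  # an event initiates it without a direct command


@dataclass(frozen=True)
class BuildingBlock:
    name: str
    category: Category
    trigger_mode: Optional[TriggerMode] = None  # set only for triggers

    def __post_init__(self) -> None:
        # Only triggers carry an active/passive distinction.
        is_trigger = self.category is Category.TRIGGER
        if is_trigger != (self.trigger_mode is not None):
            raise ValueError("trigger_mode is required for triggers and invalid otherwise")


# Illustrative subset: a few blocks named in this article, not the full list.
EXAMPLE_BLOCKS = [
    BuildingBlock("type a prompt", Category.TRIGGER, TriggerMode.ACTIVE),
    BuildingBlock("biometric data", Category.TRIGGER, TriggerMode.PASSIVE),
    BuildingBlock("list of options", Category.OUTPUT),
    BuildingBlock("upfront instructions", Category.CUSTOMIZATION),
    BuildingBlock("announce plan", Category.TASK_OVERSIGHT),
]


def names_in(category: Category) -> list:
    """Return the names of the example blocks in one category."""
    return [b.name for b in EXAMPLE_BLOCKS if b.category is category]


def passive_triggers() -> list:
    """Return the names of example triggers that fire without a user command."""
    return [b.name for b in EXAMPLE_BLOCKS if b.trigger_mode is TriggerMode.PASSIVE]
```

Keeping the active/passive flag on the block itself, rather than as a fifth category, mirrors the way the distinction applies only within triggers.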

What do users want from AI interfaces?

A central discovery from our research was that there are five key mindsets that represent distinct ways users group features together. These mindsets are not rigid personality types, but rather dynamic modes of interaction that users adopt based on the task at hand.

The delegator

As the delegator, the user treats the AI like a trusted employee, believing that with the right context, the assistant should handle the rest without check-ins.

In this mindset, the focus is on planning ahead to set the AI up for success and then letting it work autonomously to get things done. This mode of interaction is built primarily from foundational and pragmatic blocks, where the user front-loads the AI with customization elements so the AI can execute tasks as the user would.

The pragmatist

As the pragmatist, the user wants AI's help but not its independence, staying hands-on at every step.

This mindset aligns most strongly with the traditional human-in-the-loop method of AI interaction, where the goal is reliability for a discrete task, not a deep personal relationship. In this mode, the user needs to see the gears turning to build trust. Instead of delegating, the focus is on staying involved and making the prompt-refine cycle more transparent.

The confider

As the confider, the user wants the AI to know them deeply, not just help them do things.

This mindset is defined by the desire for an ever-present, context-aware companion where the relationship is the feature. In this mode, the value lies in the AI’s presence and awareness, not in completing tasks. The user interacts with aspirational and niche passive triggers on top of a foundational ongoing dialogue. Crucially, this mindset has no action- or task-based outputs. It’s about being, not doing.

The deliberator

As the deliberator, the user leans on AI to reason through difficult decisions, not to make or execute them.

In this mindset, AI acts as a sounding board for complex or sensitive topics. The goal isn’t for the AI to do anything, but to think with and speak to the user. This is a more cautious mode of interaction that demands active oversight. Deep customization creates a comfortable entity to confide in, paired with foundational, permission-based task oversight where the conversation is the final product.

The vibe-setter

As the vibe-setter, the user wants the AI to adjust their environment — the room, the mood, the atmosphere — rather than complete tasks or answer questions.

This mindset is characterized by an interest in using AI to control one’s physical environment. It is not focused on conversational tasks or complex actions. The user interacts with niche and aspirational outputs with safety-net elements of task oversight.

The UX stress test: Real-world applications

While our foundational research defines the what of future AI interfaces, the how is entirely dependent on context. A user’s willingness to delegate tasks isn't static. It fluctuates with the industry's stakes and the goal's complexity.

To bridge this gap, we applied our research rubric to five distinct industry-specific use cases. By pressure-testing our framework against real-world tasks, we can see exactly how user behavior shifts and where the boundaries of trust are truly drawn.

Scenario one: Lifestyle and entertainment

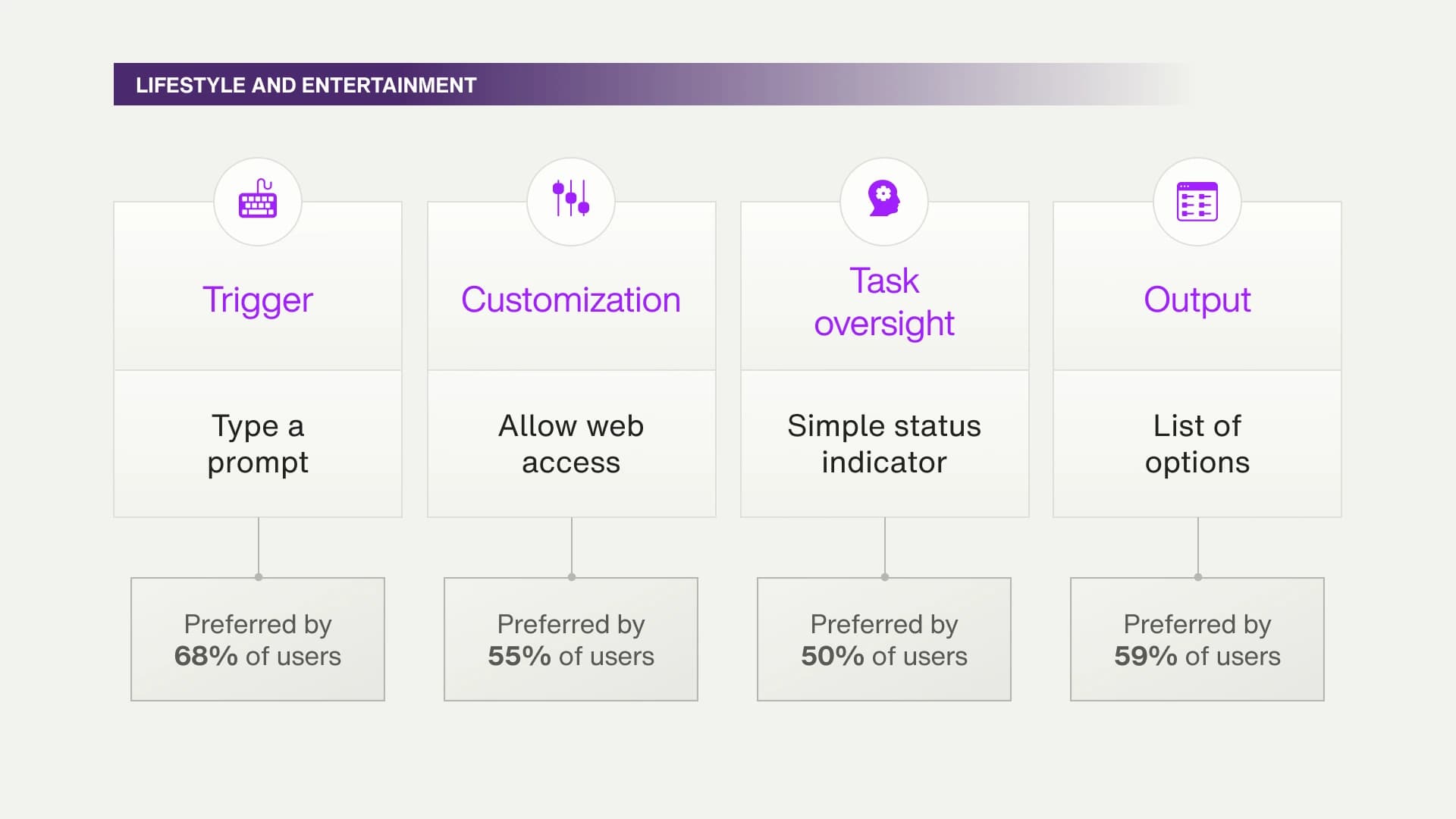

After a busy week, you’re looking forward to a relaxing evening of entertainment at home. You’d like your AI assistant to help plan your evening by recommending a movie or show and suggesting ways to enhance your experience.

When the stakes drop, user preferences split sharply

With low stakes and a subjective task, users don't need much from the interface. The top building blocks follow a direct trigger-to-output path: type a prompt (68%) leads to list of options (61%). The only task oversight feature in the top 50% is a simple status indicator — when the decision doesn't matter much, users don't need to see the AI's work.

What users do split on is control. No other scenario divided users so sharply: This one recorded the highest preference for full user control (9%) while tying for the highest preference for full AI autonomy (16%). Users either want to delegate completely or own the decision entirely. When the task is creative and personal, there's little middle ground.

The trigger data tells the same story. Alongside the expected inputs, type a prompt (68%) and speak a command (46%), this scenario registered the highest selection rates for select an emotion (23%) and emotional expression (21%) of any context we tested. When the task is subjective, users want AI to read the room.

Scenario two: Health and wellness

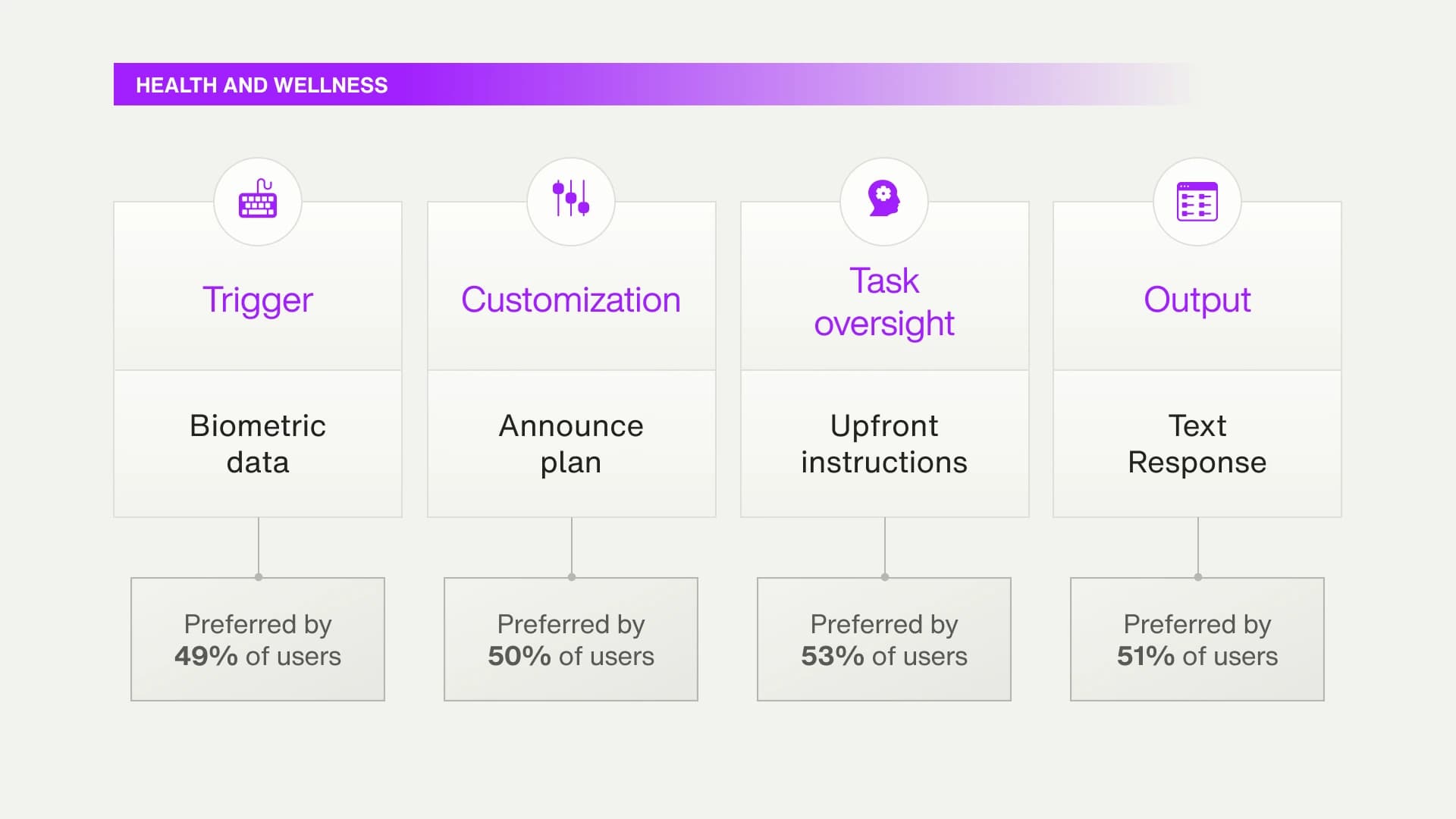

Your AI assistant knows your health metrics and medication schedule. It notices that you’ve been forgetting to take your evening medication and your blood pressure readings have been slightly elevated. The AI assistant suggests devising and implementing a plan to help you remember your medication and improve your health metrics.

Let AI monitor but announce the plan first

In this scenario, the most important building blocks aren't triggers. They're upfront instructions (customization, 53%), text response (output, 51%) and announce plan (task oversight, 50%). Before users will let AI act, they want to know exactly what it intends to do. For users, the initiation method matters far less than the plan behind it.

That said, how users initiate does shift. Type a prompt hits its lowest selection rate (45%) of our five scenarios, while the passive trigger of biometric data rises to 49%. Users are willing, and even prefer, to let the AI monitor without being asked. They just want to set the terms first.

The agentic data follows the same logic. While 44% lean toward AI autonomy, this scenario recorded the highest preference for human control of any context we tested (26%). And speak a command didn't make the top triggers. Users preferred the precision of typed input or the passivity of biometric monitoring over voice. When the stakes are personal, how users communicate with AI is itself a trust decision.

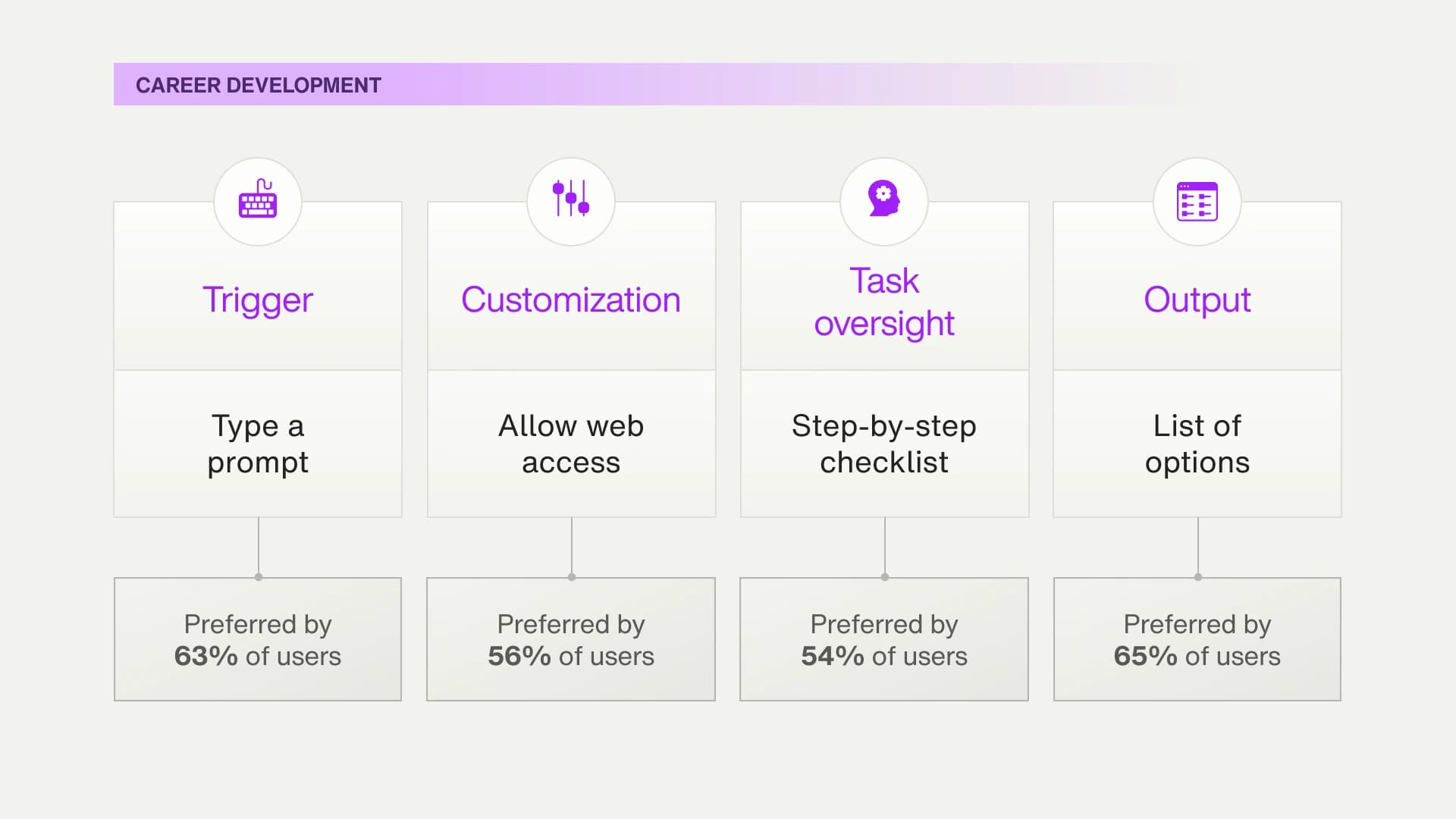

Scenario three: Career development

Your AI assistant has analyzed your work performance data and industry trends. It has identified a skill gap that could be holding you back from a promotion. The AI assistant could help you develop this skill by finding relevant courses or resources and scheduling learning sessions that fit into your busy calendar.

The process is the proof

Users here are auditing recommendations. Text response (65%) and a list of options (64%) rank above even the primary trigger, type a prompt (63%), signaling that the quality of the output matters more than how the conversation starts.

What makes this scenario distinct is the oversight data. Step-by-step checklist (54%), show sources (53%) and show thought process (49%) all reached their highest selection rates of all five scenarios. Users want to see how AI got to its answer.

That precision shows up in the trigger data too. Like the health and wellness scenario, career development is one of two scenarios where speak a command didn't make the top selections. Instead, users heavily favored type a prompt. When the advice is consequential and personal, typing isn't just a preference. It's how users stay in control of what they're asking.

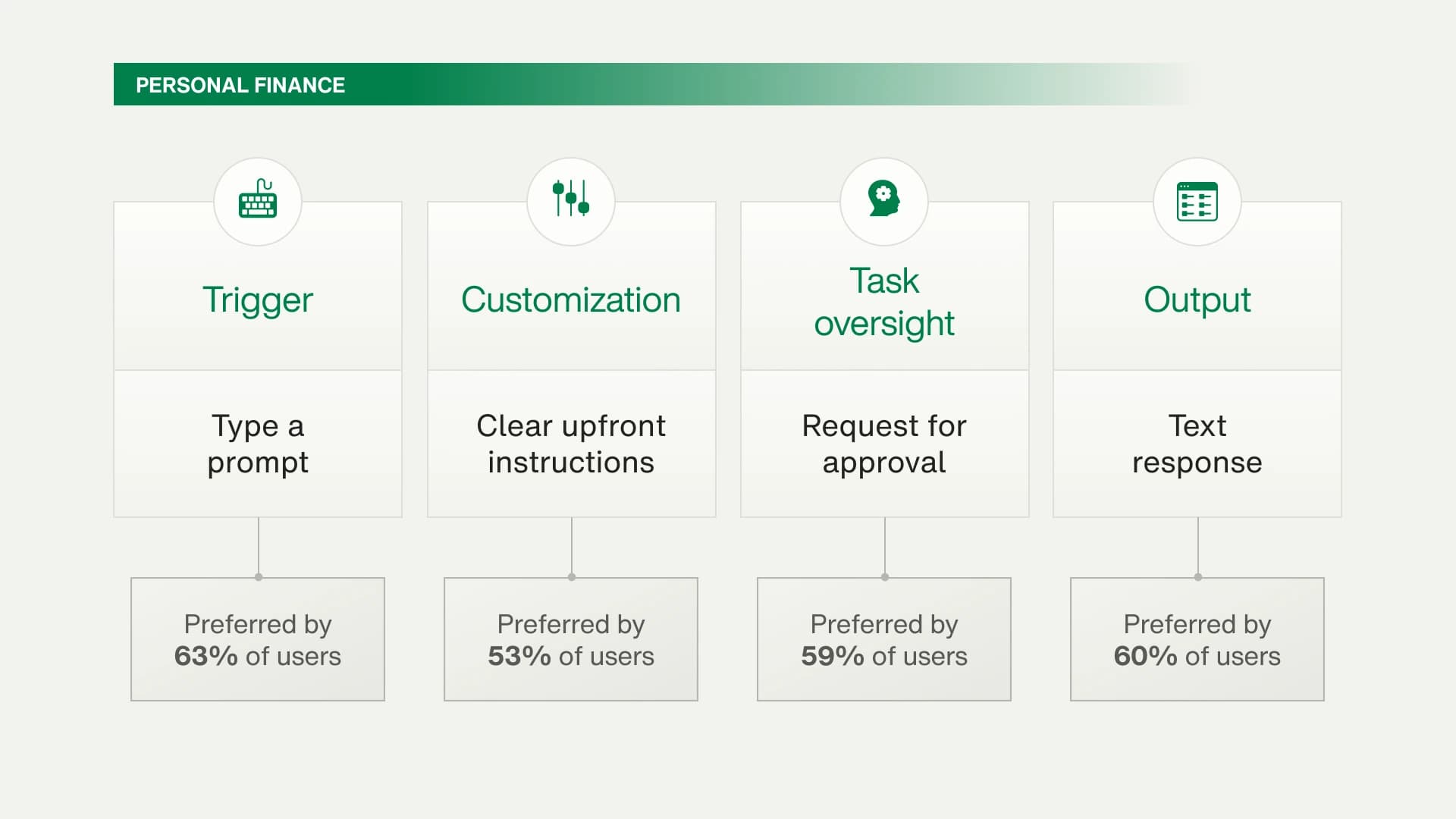

Scenario four: Personal finance

Your AI financial assistant knows that you haven’t used your gym membership in six months, which costs you $50 per month. The AI assistant suggests canceling the membership to save money. The AI can handle the entire cancellation process, including any necessary communications with the gym.

Willing to hand things off but not give up sign-off

In this financial context, users prioritize customization and comprehensive process visibility, reflecting a logical, step-by-step mental model. The ranking of top building blocks follows a traditional interaction flow, starting with type a prompt (trigger, 63%), followed by text response (output, 60%) and then request for approval (task oversight, 59%). This shows a clear user need to initiate, review and then approve.

Users lean towards letting the AI do the work (57% prefer high AI autonomy, the highest proportion among our five scenarios), enabled by the guardrail of providing clear upfront instructions (customization, 53%).

While type a prompt remains the dominant trigger, this scenario saw the highest selection rate for speak a command (48%), suggesting a desire for quick, conversational financial management.

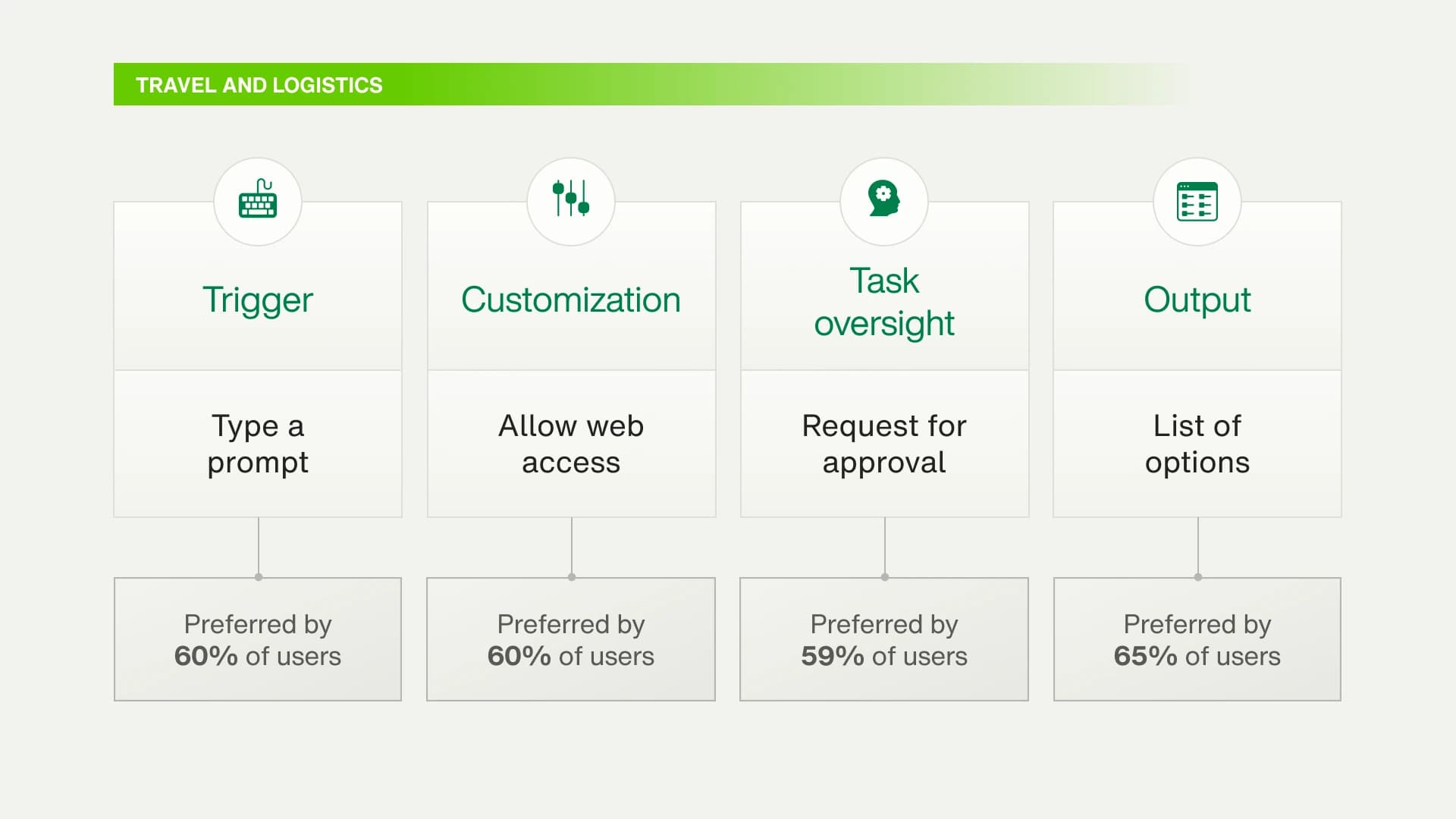

Scenario five: Travel and logistics

You’re on a vacation in Europe and your AI travel assistant has just received an alert that a transit strike will affect your plans to travel from Paris to Rome tomorrow. The AI assistant can quickly find alternative transportation options, adjust your hotel bookings if necessary and update your itinerary while staying within your budget and preferences.

The output matters more than the process

In a high-stress, dynamic travel situation, users are most willing to delegate but not without guardrails, and their primary concern is the result, not the process.

This is powerfully demonstrated by the top-ranked building block: The output, a list of options (65%), outranks even the primary trigger, type a prompt (60%). This indicates a “do the legwork, but I’ll make the final call” mindset where the quality and clarity of the output are paramount.

This scenario saw a high preference for full AI autonomy (16%) and required that the AI have real-time information, reflected in the popularity of allow web access (60%) as a customization. The high selection of speak a command (45%) underscores the need for hands-free, on-the-go interaction during a travel crisis.
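The cross-scenario comparisons quoted in the five scenarios can be checked with a simple lookup. This is a minimal sketch that uses only selection rates reported in this article; the structure and the function names (`REPORTED_RATES`, `highest_reported`, `lowest`) are hypothetical, and blocks with no published figure for a scenario are left out rather than estimated.

```python
SCENARIOS = (
    "lifestyle and entertainment",
    "health and wellness",
    "career development",
    "personal finance",
    "travel and logistics",
)

# Selection rates (percent) as quoted in this article.
# A scenario is omitted wherever the article gives no figure for that block.
REPORTED_RATES = {
    "type a prompt": {
        "lifestyle and entertainment": 68,
        "health and wellness": 45,
        "career development": 63,
        "personal finance": 63,
        "travel and logistics": 60,
    },
    "speak a command": {
        "lifestyle and entertainment": 46,
        "personal finance": 48,
        "travel and logistics": 45,
    },
    "list of options": {
        "lifestyle and entertainment": 61,
        "career development": 64,
        "travel and logistics": 65,
    },
}


def highest_reported(block):
    """Scenario with the highest rate among the figures quoted here."""
    rates = REPORTED_RATES[block]
    return max(rates, key=rates.get)


def lowest(block):
    """Scenario with the lowest rate, or None if any scenario lacks a figure.

    A missing figure could be lower than every quoted one, so the minimum
    is only meaningful when all five scenarios are covered.
    """
    rates = REPORTED_RATES[block]
    if set(rates) != set(SCENARIOS):
        return None
    return min(rates, key=rates.get)
```

For example, `lowest("type a prompt")` returns the health and wellness scenario and `highest_reported("speak a command")` returns personal finance, in line with the figures quoted in those sections.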

The path forward: Designing for the 2030 mindset

The era of the transactional chatbot is ending, and a single, smarter interface isn’t what replaces it. Gartner projects 40% of enterprise applications will feature task-specific AI agents by the end of 2026, making the interface decisions outlined here less theoretical and more urgent. The design challenge ahead shifts with every industry, every use case and every user's sense of what's at stake.

There's no shortcut. Deploying more capable AI won’t necessarily earn lasting user trust for brands. That will require deploying the right building blocks for the right mindsets at the right moments. Getting that wrong creates friction that disengages users from AI experiences they'd otherwise welcome.

TELUS Digital works with BFSI, healthcare, retail and media brands to turn this kind of research into interface decisions, answering questions such as:

- Which triggers and oversight features drive adoption in our specific context?

- How do we architect agentic solutions that earn trust through transparency?

- How do we design for the voice-first, device-agnostic experiences users are expecting?

Explore the full research report, or contact our team to start mapping your AI interface strategy.