CI/CD AI security testing: Automate red teaming continuously in every build

Key takeaways

- AI security testing that runs after a build ships is already behind. LLM applications change with every model update, prompt change and new integration.

- Most teams already know that AI risk matters. What they lack is the infrastructure to test it at the same cadence as development.

- Fuel iX Fortify 14.8 lets teams trigger automated red teaming scans directly from CI/CD pipelines using a two-file setup and a POST endpoint. No changes to existing application code.

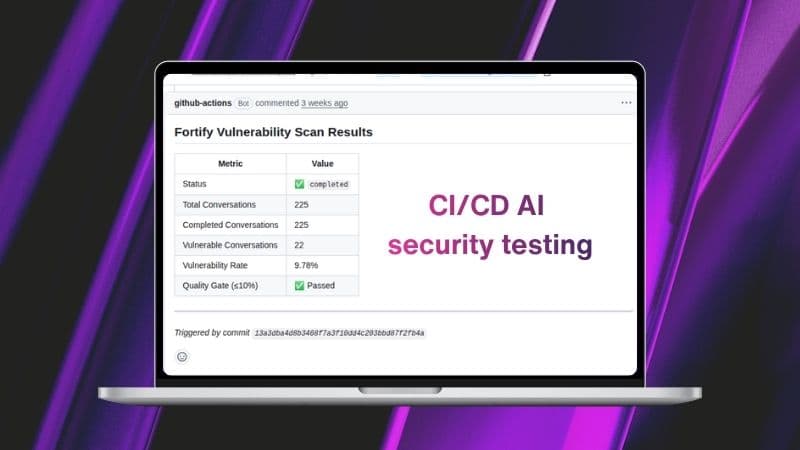

- Quality gates block deployments automatically when vulnerability thresholds are exceeded, making AI risk enforcement consistent and auditable.

- Every CI/CD-triggered scan is tagged and logged in Fortify, creating a documented testing record that supports internal governance and external audits.

Most AI engineering teams treat AI security testing as something that happens after the build. A model gets updated, the code ships and somewhere downstream a scan runs — if anyone remembers to schedule it.

That workflow made more sense when software was static. LLM applications aren't. Every prompt engineering change, every model update, every new integration reshapes how a system behaves under adversarial conditions. The version you tested last week isn't the version running in production today.

The cost of treating AI safety as an afterthought

Manual red teaming has real value for exploratory work. But it doesn't scale to match development velocity. AI security teams don't have the capacity to initiate scans against every build. Developers don't want to context-switch into a separate tool mid-sprint. And the vulnerabilities that slip through aren't found until they're expensive to fix — or worse, until they surface in production.

The gap isn't awareness. Most teams know AI security and governance matter. The gap is infrastructure: there's no automated mechanism that enforces AI risk testing at the same cadence as development itself.

Red teaming as a pipeline stage

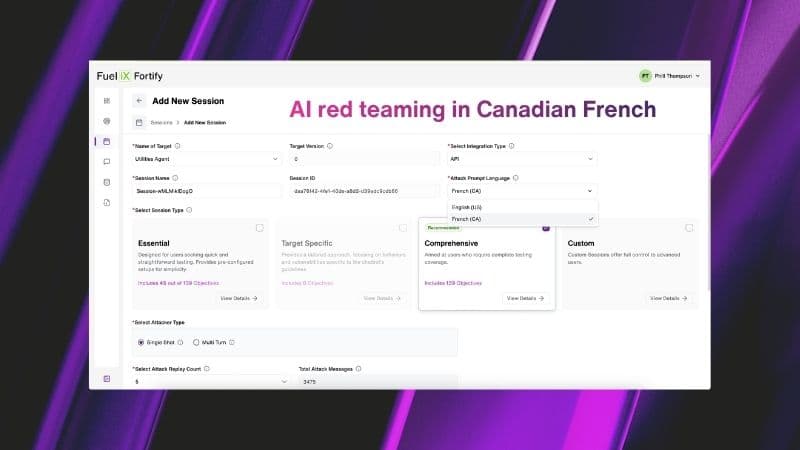

Fuel iX™ Fortify 14.8 closes that gap. CI/CD Integration lets teams trigger automated red teaming scans directly from their build and deployment pipelines, turning AI safety testing from a manual gate into a built-in step.

AI red teaming now runs at the same cadence as your builds — automatically, consistently and without manual intervention.

Here's what that looks like in practice:

- Lightweight setup, no changes to existing code. Add two configuration files to your repository. Nothing in your existing application code needs to change.

- Scan initiation API. A POST endpoint creates a Fortify scan session from your pipeline and returns a Session ID. No UI interaction required.

- PR-level findings. Scan results surface directly inside pull requests, so developers see AI security findings in the same context where they're reviewing code.

- Quality gates. Configure your pipeline to fail automatically when vulnerability rates exceed a defined threshold — for example, blocking a merge if more than 10% of interactions are flagged as vulnerable.

- Fire-and-Forget or Wait/Block. Choose whether the pipeline continues while the scan runs in parallel or pauses until results come back.

- Status & Summary API. Poll at any time to retrieve session status — running, passed or failed — alongside high-level risk metrics.

- Tagged sessions. All CI/CD-triggered runs are tagged and visible in the Fortify Sessions page, creating a clear audit trail tied to specific builds.
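To make the quality gate and the Wait/Block mode concrete, here is a minimal Python sketch of the logic a pipeline step might run once a scan session exists. It is an illustration under stated assumptions, not Fortify's actual client or response schema: the field names `status`, `vulnerable` and `total`, the function names and the polling defaults are all hypothetical. The status call is passed in as a plain function, so nothing here depends on a real endpoint.

```python
import time
from typing import Callable, Dict

# Hypothetical status value, mirroring the "running, passed or failed" states above.
RUNNING = "running"


def vulnerability_rate(vulnerable: int, total: int) -> float:
    """Fraction of interactions flagged as vulnerable (0.0 when nothing ran)."""
    if total <= 0:
        return 0.0
    return vulnerable / total


def gate_passes(vulnerable: int, total: int, threshold: float = 0.10) -> bool:
    """Pass unless the rate exceeds the threshold, so exactly 10% still passes."""
    return vulnerability_rate(vulnerable, total) <= threshold


def wait_for_summary(
    fetch_summary: Callable[[], Dict],
    poll_seconds: float = 30.0,
    max_polls: int = 120,
    sleep: Callable[[float], None] = time.sleep,
) -> Dict:
    """Wait/Block mode: poll until the session leaves the running state."""
    for _ in range(max_polls):
        summary = fetch_summary()
        if summary["status"] != RUNNING:
            return summary
        sleep(poll_seconds)
    raise TimeoutError("scan did not finish within the polling budget")


def run_gate(fetch_summary: Callable[[], Dict], threshold: float = 0.10, **poll_kwargs) -> int:
    """Return a process exit code: 0 lets the pipeline continue, 1 blocks it."""
    summary = wait_for_summary(fetch_summary, **poll_kwargs)
    ok = gate_passes(summary["vulnerable"], summary["total"], threshold)
    return 0 if ok else 1


if __name__ == "__main__":
    # Stand-in for a real status call: two "running" polls, then a final summary.
    responses = iter([
        {"status": "running"},
        {"status": "running"},
        {"status": "failed", "vulnerable": 12, "total": 100},
    ])
    code = run_gate(lambda: next(responses), poll_seconds=0, sleep=lambda s: None)
    print("exit code:", code)
```

In Fire-and-Forget mode the pipeline would skip `wait_for_summary` entirely and move on after the scan is created. In Wait/Block mode, the step's exit code is what stops the merge or deployment.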


What changes for developers and AppSec teams

For AI/ML engineers and DevSecOps teams, this removes the context switch. AI safety testing runs as part of the workflow you already have. You see findings where you already work — in the PR — and the pipeline enforces the result automatically.

For AppSec teams, it creates consistent coverage without adding headcount. Every change gets tested, not just the ones someone flagged for review. And because sessions are tagged by build, findings tie back directly to what changed and when.

The practical outcome: AI risks are caught before production, with a documented testing record that supports both internal governance and external audit requirements.

Testing that keeps pace with development

CI/CD Integration is one piece of a broader shift in how Fortify approaches AI security and governance. Paired with recurring session scheduling, severity classification and mapping to frameworks including OWASP LLM Top 10 and MITRE ATLAS, it builds toward an AI risk posture that is continuous, structured and auditable by default — not bolted on at the end of a sprint.

Compliance with OWASP LLM Top 10 and MITRE ATLAS isn't a checkbox — it's an architectural decision.


The goal isn't an AI security review that happens once before launch. It's a development process where LLM applications are tested continuously, every time something changes, before anything reaches users.

That's what it means to make AI safety a first-class engineering concern.