AI-first software delivery: The new standard for enterprises and how it works

Adam Shea

Sr. Director, Engineering

Key takeaways

- TELUS Digital is redefining the enterprise software standard by moving beyond incremental AI productivity to a fully integrated, AI-first delivery methodology.

- Shifting from siloed AI tasks to an organization-wide context layer dramatically resets the bar for delivery speed, quality and total cost of ownership.

- Re-engineering the software development lifecycle requires a fundamental shift in mechanics, transforming everything from initial client requirements to automated QA and team management.

- Transitioning to agentic workflows automates the administrative tax of leadership, allowing managers to refocus on high-value human judgment and team health.

The initial conversation around AI in software development, which was largely focused on incremental gains in individual productivity, is rapidly evolving. Faster coding doesn't automatically equal faster delivery, which is why TELUS Digital has been thinking bigger.

Over the past year, we’ve implemented a fundamental shift: from AI as a sidekick for individual tasks to AI as the connective tissue of our entire delivery organization. By re-engineering the structure of our teams, the definition of our roles and the very nature of the software development lifecycle (SDLC), we have moved from siloed AI usage to a new operational standard.

This guide is for the leader who needs to understand the mechanics of this shift. We’ll go behind the scenes of our AI-first framework to show how our jobs-to-be-done mindset reshapes collaboration across design, code and context, enabling us to deliver premium digital products at a pace previously thought impossible.

The foundational tools of AI-first software development

Before getting into use cases, it's worth understanding the tools underpinning our approach.

At the core is Fuel iX™, our in-house AI platform. Built to support chat, agents and Model Context Protocol (MCP) tool integrations, Fuel iX is self-hosted and secure, purpose-built for enterprise environments where data privacy and compliance aren't optional. Unlike off-the-shelf solutions, Fuel iX can connect to various underlying models and is designed to plug into the systems our teams already live in.

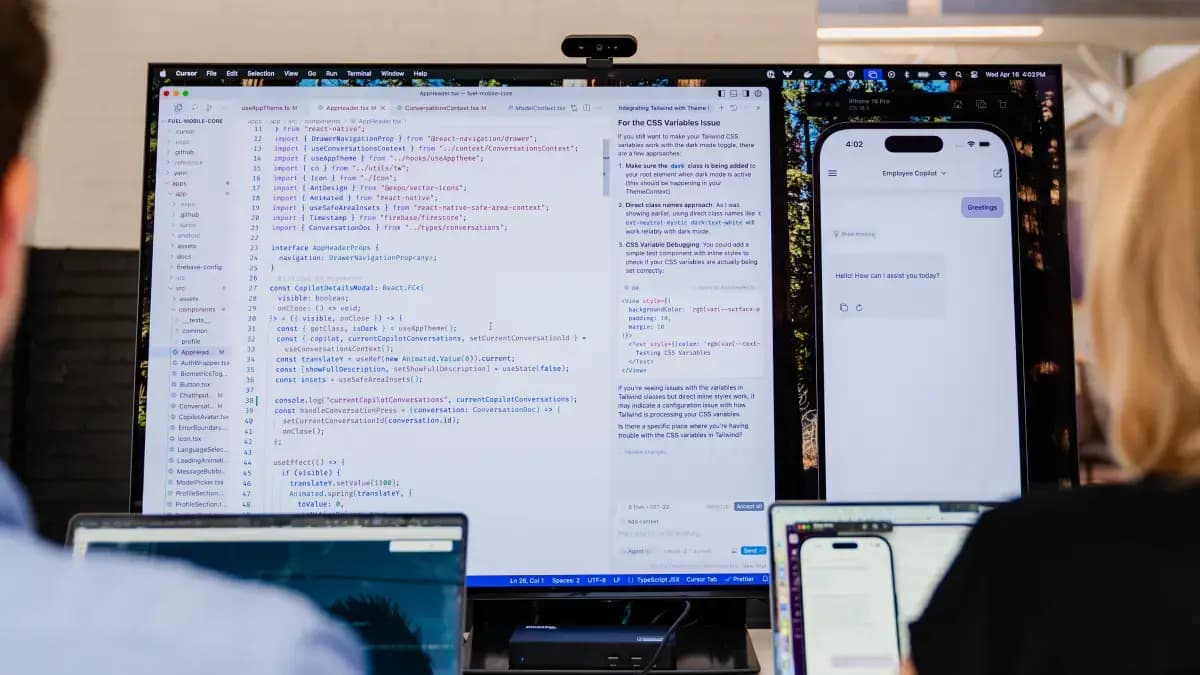

Alongside Fuel iX, our teams use Cursor, an integrated development environment (IDE) that brings powerful AI capabilities into a familiar VS Code environment, and Claude Code as an IDE-less alternative for teams who prefer an agentic coding workflow outside the editor. We also use Claude Cowork, a desktop tool that connects AI to productivity systems like Slack, Gmail, Jira, GitHub and Granola.

Critically, we've built a custom analytics dashboard that tracks how every team across the organization is actually leveraging these tools. While most companies are asking, "Are we using AI?" we're asking, "How effectively, and where are the gaps we can improve?"
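At its simplest, a dashboard like this turns raw usage events into per-team adoption figures. The following is a minimal sketch that assumes a hypothetical `UsageEvent` record and team roster. It is not the schema of our actual dashboard, and it measures only breadth of adoption (who used a tool at all), not effectiveness.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageEvent:
    """One recorded AI-tool interaction (hypothetical schema)."""
    team: str
    user: str
    tool: str  # e.g. "cursor", "claude_code", "fuel_ix"


def adoption_by_team(events, roster):
    """Fraction of each team's members who used at least one AI tool."""
    active = defaultdict(set)
    for event in events:
        active[event.team].add(event.user)
    return {
        team: len(active[team] & set(members)) / len(members)
        for team, members in roster.items()
        if members  # skip empty teams to avoid dividing by zero
    }


def adoption_gaps(events, roster, threshold=0.5):
    """Teams below the adoption threshold, lowest adoption first."""
    rates = adoption_by_team(events, roster)
    return sorted((team for team, rate in rates.items() if rate < threshold), key=rates.get)


roster = {"payments": ["ana", "ben", "cy"], "search": ["dee", "eli"]}
events = [
    UsageEvent("payments", "ana", "cursor"),
    UsageEvent("payments", "ben", "fuel_ix"),
    UsageEvent("search", "dee", "claude_code"),
]
print(adoption_by_team(events, roster))
print(adoption_gaps(events, roster, threshold=0.6))
```

Answering "where are the gaps" also requires effectiveness signals, such as accepted suggestions or merged AI-assisted PRs. Those could be added as further fields on the event record.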

AI across the full delivery lifecycle

Moving at the speed of AI requires removing the traditional friction points of handoffs and manual status checks. Here is how we’ve enhanced each stage of the lifecycle to turn AI into a force multiplier for every role on the squad, insulating against the scope creep that can occur when execution drifts away from strategy.

Product requirements: From client conversation to Jira

The requirements process is where many delivery cycles slow down or go sideways, and it's one of the areas where our AI integration pays off earliest.

When our teams meet with clients, those conversations are automatically transcribed using an AI notetaker. From there, an AI story generation agent takes the transcript and drafts user acceptance testing (UAT) tickets and user stories directly, with full awareness of existing Jira context and prior work. Those tickets are automatically pushed to Jira, with a human reviewing and refining them before anything moves forward. The heavy lifting of translating a conversation into structured, organized work items happens in minutes rather than days.
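The human-in-the-loop step deserves to be made explicit. Here is a minimal sketch that assumes hypothetical `DraftStory` records produced by the story agent. Drafts that duplicate an existing ticket summary are dropped, and nothing moves forward until a reviewer approves it. The Jira integration itself is omitted, and every name here is illustrative rather than the agent's real interface.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    DRAFT = "draft"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class DraftStory:
    """A story drafted from a transcript by the (hypothetical) story agent."""
    summary: str
    acceptance_criteria: list[str] = field(default_factory=list)
    status: Status = Status.DRAFT
    reviewer_notes: str = ""


def _normalize(text: str) -> str:
    # Case- and whitespace-insensitive comparison key.
    return " ".join(text.lower().split())


def drop_duplicates(drafts, existing_summaries):
    """Skip drafts whose summary matches a ticket that already exists."""
    seen = {_normalize(s) for s in existing_summaries}
    return [d for d in drafts if _normalize(d.summary) not in seen]


def review(story, approve, notes=""):
    """Record a human reviewer's decision on a draft."""
    story.status = Status.APPROVED if approve else Status.REJECTED
    story.reviewer_notes = notes
    return story


def ready_to_move_forward(stories):
    """Only human-approved stories leave the review gate."""
    return [s for s in stories if s.status is Status.APPROVED]


drafts = [
    DraftStory("As a user, I can reset my password by SMS", ["Code expires after 10 minutes"]),
    DraftStory("As a user, I can log in with email"),  # already exists in the tracker
]
fresh = drop_duplicates(drafts, ["As a user, I can log in with Email"])
review(fresh[0], approve=True, notes="Added expiry criterion")
approved = ready_to_move_forward(fresh)
```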

The approach to feature definition has also matured considerably. Rather than prompting AI to simply write a story, our product leads do the solution mapping first and then hand that mapped-out thinking to the agent to translate into well-structured stories. As a result, the AI is working from a richer starting point, which means the output requires far less rework. It's a meaningful shift in how product thinking and AI tooling interact.

We also leverage AI for large-scale operational tasks that traditionally consume days of manual effort, such as:

- Gap analysis: AI tooling compares and reconciles multiple versions of authoritative source documents over time, surfacing discrepancies to provide a clearer picture of actual requirements.

- Ticket recategorization at scale: When restructuring a body of work, AI crawls and analyzes months of ticket history, automatically remapping tickets to new parent epics and generating the necessary bulk assignment queries.

- Multi-source data analysis: Product leads routinely use AI to compare the current codebase, legacy code, requirements documentation and Figma designs simultaneously. This identifies gaps, traces flows and produces shared diagrams for implementation planning.

- Contextual project search: A custom project search tool sits across the project’s knowledge base, answering contextual questions about the work, decisions and codebase. This significantly accelerates ramp-up time for new team members.
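For gap analysis, the core operation is a line-level comparison of two document versions. The sketch below uses Python's standard `difflib.ndiff` as a deterministic stand-in for the AI reconciliation step. It surfaces only textual changes, whereas the tooling described above must also judge which version is authoritative. The requirement strings are invented examples.

```python
import difflib


def requirement_changes(old_version, new_version):
    """Return (removed, added) requirement lines between two document versions."""
    removed, added = [], []
    for line in difflib.ndiff(old_version, new_version):
        if line.startswith("- "):
            removed.append(line[2:])
        elif line.startswith("+ "):
            added.append(line[2:])
        # "  " marks unchanged lines and "? " marks intraline hints; both are skipped.
    return removed, added


v1 = [
    "Users can reset their password via email",
    "Sessions expire after 30 minutes",
]
v2 = [
    "Users can reset their password via email or SMS",
    "Sessions expire after 30 minutes",
    "Admins can lock accounts",
]
removed, added = requirement_changes(v1, v2)
print("Removed:", removed)
print("Added:", added)
```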

Perhaps the most powerful product use case is the implementation audit. Our product leads use AI to consolidate all requirements into a single context and then scan the codebase to look for proof of implementation, identifying what's been built, what's in progress and what might be missing entirely. This kind of smoke test directly informs scope adjustments during milestone delivery, giving the team a grounded, evidence-based view of where things actually stand rather than relying on status updates alone.
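A crude version of the implementation audit can be expressed as a traceability check. This sketch assumes a hypothetical convention in which code cites requirement IDs such as `REQ-12` in comments, and `load_sources` and `audit` are illustrative helpers. The AI-driven audit does not depend on such tags and instead reasons about the code itself, so treat this as an illustration of the report's shape (implemented, missing, untracked) rather than of the method.

```python
import re
from pathlib import Path

# Hypothetical convention: code cites requirements as REQ-<number>.
REQ_ID = re.compile(r"\bREQ-\d+\b")


def load_sources(root, pattern="*.py"):
    """Read matching source files under root into {relative_path: text}."""
    base = Path(root)
    return {
        str(path.relative_to(base)): path.read_text(encoding="utf-8", errors="ignore")
        for path in base.rglob(pattern)
    }


def audit(requirement_ids, sources):
    """Classify requirements by whether any source file references them."""
    found = {}
    for path, text in sources.items():
        for req in REQ_ID.findall(text):
            found.setdefault(req, set()).add(path)
    return {
        "implemented": {r: sorted(found[r]) for r in requirement_ids if r in found},
        "missing": [r for r in requirement_ids if r not in found],
        "untracked": sorted(set(found) - set(requirement_ids)),
    }


sources = {
    "auth/reset.py": "# REQ-1: password reset\ndef reset_password(user): ...\n",
    "auth/lock.py": "# REQ-3: admin account lock (not in the current spec)\n",
}
report = audit(["REQ-1", "REQ-2"], sources)
print(report)
```

The "untracked" bucket is worth keeping. Code that cites no current requirement is often where scope has drifted.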

Design: From Figma to the IDE

One of the most striking shifts we've seen is in how our designers work. Increasingly, they're not spending their days in Figma producing static mockups for handoffs. They're working directly in the IDE, creating prototypes with real code and skipping the mockup layer entirely. This allows designers to focus on UX logic and edge cases in a live environment rather than pixel-pushing in static frames.

This is more than a workflow change; it's a philosophical one. It means designers are shipping closer to the truth of what will actually be built, and it eliminates an entire category of handoff friction. When design lives in the same environment as development, the gap between intent and implementation closes dramatically.

TELUS Digital Staff Product Designer Karolina Whitmore shared with us what that means in practice:

- Using Cursor to identify and fix UI bugs in the live build by opening direct pull requests (PRs), bypassing the engineer routing step

- Using Playwright via MCP to test user flows and debug issues firsthand

- Testing accessibility and responsiveness directly in the browser, eliminating the need for multiple Figma frames

- Pulling Jira tickets directly via MCP, implementing the change and moving the ticket forward, all without leaving the IDE
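Under the hood, an MCP client asks a server to run a tool by sending a JSON-RPC 2.0 request with the `tools/call` method. The sketch below builds only the request bodies. A real client first performs the `initialize` handshake, discovers tools with `tools/list` and sends messages over a transport such as stdio. The tool name `get_issue` and its `issue_key` argument are hypothetical, because each Jira MCP server defines its own tools.

```python
import json
from itertools import count

# Monotonic request IDs so responses can be matched to requests.
_request_ids = count(1)


def rpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request body as used by MCP."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def tools_call(name, arguments):
    """Ask an MCP server to invoke one of its tools."""
    return rpc_request("tools/call", {"name": name, "arguments": arguments})


list_request = rpc_request("tools/list")
# Hypothetical Jira tool name and argument; check the server's tools/list output.
call_request = tools_call("get_issue", {"issue_key": "PROJ-123"})
print(json.dumps(call_request, indent=2))
```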

The downstream effect on the engineering relationship is significant too. A designer who has explored the codebase, understands how components are structured and has debugged a few issues comes to conversations with engineers with a fundamentally different level of context. Those conversations are shorter, more precise and less likely to produce misaligned work.

Engineering: Context-aware development and review

Our engineering workflow is one of the most mature examples of AI integration we have. Developers work with multiple agents simultaneously, using Cursor or Claude Code to accelerate everything from initial implementation to debugging and documentation. The choice between the two comes down to preference:

- Cursor: For those who want AI woven into their editor experience

- Claude Code: For those who prefer to work outside the editor

The more important innovation is context. By integrating Jira via MCP, our agents can access the user stories behind the code they review, so AI-assisted code review checks whether the implementation fulfills the business requirement, not just whether the syntax is correct. Our teams run multiple agents in parallel on the same review and select the best output, which makes the review both broader in coverage and more consistent in quality.
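The run-in-parallel-and-select pattern can be sketched with the standard library's thread pool. This minimal sketch rests on two assumptions. First, the reviewer functions are hypothetical stubs standing in for model-backed agents. Second, the selection rule (prefer the review that addresses the most acceptance criteria from the linked story, then the one with more findings) is one plausible heuristic, not necessarily how our teams choose.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass, field


@dataclass
class Review:
    agent: str
    criteria_checked: set[str] = field(default_factory=set)
    findings: list[str] = field(default_factory=list)


def best_review(reviewers, diff, story_criteria):
    """Run every reviewer on the same diff in parallel and keep the strongest review."""
    with ThreadPoolExecutor(max_workers=len(reviewers)) as pool:
        reviews = list(pool.map(lambda reviewer: reviewer(diff, story_criteria), reviewers))
    wanted = set(story_criteria)
    # Rank by coverage of the story's acceptance criteria, then by number of findings.
    return max(reviews, key=lambda r: (len(r.criteria_checked & wanted), len(r.findings)))


# Hypothetical stub agents; real ones would call a model with the diff and story.
def syntax_focused_agent(diff, criteria):
    return Review("syntax", {"AC1"}, ["Unused import in handler.py"])


def story_aware_agent(diff, criteria):
    return Review("story", {"AC1", "AC2"}, ["AC2: expired tokens are not rejected"])


best = best_review(
    [syntax_focused_agent, story_aware_agent],
    diff="(diff text)",
    story_criteria=["AC1", "AC2"],
)
print(best.agent, best.findings)
```

Threads suit this case because each real agent call would spend most of its time waiting on network I/O.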

We also integrate Figma via MCP, which gives our dev agents visibility into design intent. When a PR is opened, AI can flag discrepancies between what was built and what was designed and, in some cases, automatically make fixes. Every PR runs through AI-assisted review, with issues flagged and prioritized before a human reviewer ever looks at it. The result is a collaborative model where AI handles the first pass at scale, and humans make the final call with better information than they'd have had otherwise.
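Flagging and prioritizing design discrepancies can be pictured as comparing design tokens against implemented values. In this sketch, the token names, the values and the `SEVERITY` weights are all hypothetical. In practice, the design values would come from Figma via MCP and the implemented values from the built UI. The AI reviewer also catches discrepancies that don't reduce to tokens.

```python
# Hypothetical weights: higher means a reviewer should see it sooner.
SEVERITY = {"color": 2, "typography": 2, "spacing": 1, "radius": 0}


def design_discrepancies(design_tokens, built_tokens):
    """List tokens whose built value differs from the design, highest severity first."""
    issues = []
    for token, expected in design_tokens.items():
        actual = built_tokens.get(token)  # None if the token was never implemented
        if actual != expected:
            category = token.split(".", 1)[0]
            issues.append({
                "token": token,
                "expected": expected,
                "actual": actual,
                "severity": SEVERITY.get(category, 1),
            })
    return sorted(issues, key=lambda issue: (-issue["severity"], issue["token"]))


design = {
    "color.primary": "#5A2D82",
    "spacing.card": "16px",
    "radius.button": "4px",
    "typography.body": "16px",
}
built = {"color.primary": "#5A2D82", "spacing.card": "12px", "radius.button": "8px"}
for issue in design_discrepancies(design, built):
    print(issue["severity"], issue["token"], issue["expected"], "->", issue["actual"])
```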

QA: Smarter testing at every stage

Our test engineers have found some of the most practical AI applications in the entire delivery cycle, particularly in these three areas where traditional approaches run out of steam:

- Root cause analysis: Agents analyze symptoms and cross-reference code history to surface hypotheses on difficult-to-trace bugs, compressing what used to take hours of manual investigation into a single focused session.

- Test suite construction: AI accelerates the scaffolding of repetitive, structurally similar test components across files, ensuring quality coverage while speeding up the building process.

- Knowledge building: AI acts as a learning accelerator for engineers on complex platforms, providing explanations of system components and best practices, thereby considerably compressing ramp-up time.
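Scaffolding structurally similar tests is, at its core, template expansion. This minimal sketch assumes hypothetical component names and module paths. A single template renders a `unittest` skeleton for each component, and the placeholder test is skipped until someone fills in real expectations. AI tooling adapts scaffolds like these to each file's specifics, which a fixed template cannot do.

```python
from string import Template

# One skeleton shared by every structurally similar component test.
TEST_TEMPLATE = Template('''\
import unittest

from $module import $component


class Test$component(unittest.TestCase):
    def test_constructs_with_defaults(self):
        self.assertIsNotNone($component())

    def test_handles_empty_input(self):
        self.skipTest("TODO: define expected behavior for empty input")


if __name__ == "__main__":
    unittest.main()
''')


def scaffold(components):
    """Map a test filename to rendered source for each (module, component) pair."""
    return {
        f"test_{component.lower()}.py": TEST_TEMPLATE.substitute(module=module, component=component)
        for module, component in components
    }


files = scaffold([("ui.buttons", "PrimaryButton"), ("ui.forms", "SearchField")])
for name in files:
    print(name)
```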

AI-powered people management

Perhaps the most underappreciated use case in our stack is what Claude Cowork enables for people managers. The workflow is genuinely different from anything most managers have experienced.

The foundation is Granola (or any AI notetaker with an MCP server), which captures notes from every meeting, including 1:1s. Claude Cowork connects to Granola, allowing a manager to ask natural-language questions across their entire meeting history. It also integrates with Gmail, Jira, GitHub and Google Calendar, and a manager can supply standing context through a system prompt describing their team members, projects and relationships.

With that context in place, the questions a manager can ask become genuinely powerful. A manager might ask Claude Cowork to pull the last four weeks of status reports from Gmail and correlate them with recent 1:1 notes and GitHub activity to surface emerging risks or delivery gaps. They might also ask it to look across all their 1:1 notes and flag recurring themes: the kinds of signals that are easy to miss when you're moving quickly but that compound if left unaddressed.
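Flagging recurring themes comes down to counting how many separate notes mention each theme. This sketch assumes the manager supplies themes as plain phrases, and it relies on simple word-boundary matching. An AI assistant would recognize paraphrases that this approach misses. The notes are invented examples.

```python
import re
from collections import Counter


def recurring_themes(notes, themes, min_notes=3):
    """Themes mentioned in at least `min_notes` separate notes, most frequent first."""
    counts = Counter()
    for note in notes:
        text = note.lower()
        for theme in themes:
            # Count each theme at most once per note.
            if re.search(rf"\b{re.escape(theme.lower())}\b", text):
                counts[theme] += 1
    return [(theme, n) for theme, n in counts.most_common() if n >= min_notes]


notes = [
    "Discussed on-call fatigue and release timing",
    "Still feeling on-call fatigue; interested in mentoring",
    "Release timing slipped again",
    "Asked about mentoring; on-call fatigue came up again",
]
print(recurring_themes(notes, ["on-call fatigue", "release timing", "mentoring"]))
```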

Our managers typically automate two high-leverage scheduled tasks:

- Daily 1:1 preparation: The task processes end-of-day notes and updates a running document for each team member with suggested topics for the next 1:1. It also cross-references notes across different meetings (e.g., if a name comes up in a separate discussion) to ensure no detail is missed.

- Nightly executive calendar assistant: The task reviews the next day’s schedule, flags conflicts and can automatically reschedule invites, manage meetings and draft explanatory emails. This significantly reduces cognitive load for managers carrying full calendars.
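The conflict-flagging half of the nightly calendar task is a classic interval-overlap check. This minimal sketch assumes a hypothetical `Event` record. Sorting by start time lets the inner loop stop as soon as a later event starts after the current one ends. Rescheduling invites and drafting emails are beyond the scope of this sketch.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Event:
    title: str
    start: datetime
    end: datetime


def find_conflicts(events):
    """Return (earlier, later) title pairs for every pair of overlapping events."""
    ordered = sorted(events, key=lambda e: e.start)
    conflicts = []
    for i, current in enumerate(ordered):
        for later in ordered[i + 1:]:
            if later.start >= current.end:
                break  # sorted by start: no remaining event can overlap `current`
            conflicts.append((current.title, later.title))
    return conflicts


def at(hour, minute=0):
    return datetime(2025, 1, 15, hour, minute)


tomorrow = [
    Event("Exec sync", at(10, 15), at(11)),
    Event("Standup", at(9), at(9, 30)),
    Event("1:1 with Sam", at(10), at(10, 30)),
    Event("Design review", at(9, 15), at(10)),
]
print(find_conflicts(tomorrow))
```

Back-to-back meetings (one ending exactly when the next begins) are deliberately not treated as conflicts.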

The net effect is a manager who walks into every 1:1 prepared, who can spot team-wide trends before they become problems and who spends less time on calendar administration and status aggregation.

That's more time for the work that actually requires human judgment.

What drives results at TELUS Digital

Our AI-first methodology is generating measurable gains in delivery speed and cost efficiency. Teams are doing more with smaller headcounts, and as frontier models handle an increasing share of rote execution, we are seeing the quality bar for enterprise delivery reset at a higher level than ever before.

But the real driver isn't any single tool. It's the connective tissue: the fact that product, design, engineering and QA are all operating from a shared, AI-accessible context layer. When your code reviewer has access to the user story, the Figma design and the client conversation that inspired the feature, you're not just moving faster … you're building the right thing.

For our partners, this means we ship with a precision that minimizes the accumulation of technical debt. That’s the new bottom line of AI-first web and mobile app development: premium quality and reduced TCO, delivered at an increased velocity.

We're still early. But the shape of what's coming is clear. Check out our real-world client applications of AI-first delivery to learn how you can unlock the potential in your project backlog.
